Welcome back to the Research Skill Center. In our previous session, Lecture 03, we unlocked the power of Autonomous AI Agents like AutoGPT to automate our data collection. It felt like magic, right? But as every professional researcher knows, magic can sometimes be an illusion.

Today, we are diving into the most critical part of your journey: Lecture 04, Fact-Checking & Integrity. We are going to learn how to verify AI-generated citations, detect hallucinations, and keep your work as accurate as possible. If you want your blog to get AdSense approval or your academic papers to be accepted, this lecture is your shield against misinformation.

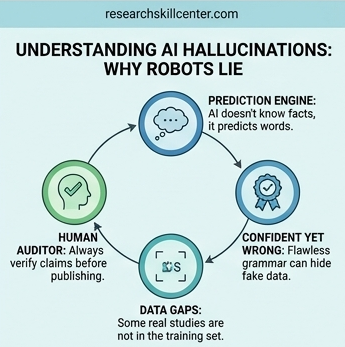

Why AI Lies: The Science of Hallucinations

To be a master researcher, you must understand that AI models (LLMs) are not encyclopedias; they are statistical prediction engines. They don’t look facts up; they predict the next most likely word in a sentence based on patterns in their training data.

Because the model is built to always produce a fluent answer, it sometimes invents a fact to fill a gap. This is called an AI Hallucination. It might give you a beautiful quote from a famous scientist that was never actually said, or a link to a study that doesn’t exist. In the world of Technical Writing and Academic Research, one fake fact can destroy your reputation. Our goal today is to make you a Fact-Checking Detective.

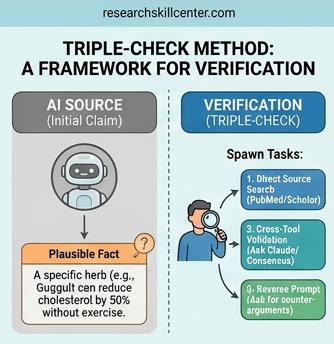

1. The Triple-Check Framework: Your Verification System

Don’t just take the AI’s word for it. Use the Triple-Check Method to ensure every piece of data is solid gold.

Step 1: Direct Source Authentication

If an AI gives you a citation (e.g., “According to a 2024 study by Stanford…”), your first job is to find the original document. Copy the title of the study and paste it into Google Scholar, ResearchGate, or PubMed. If the document doesn’t appear in any of these databases, treat it as a likely hallucination until you can locate it on the publisher’s own site or through its DOI.
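Once you have searched for the title, the comparison itself can be partly automated. Here is a minimal Python sketch, assuming you have already copied the result titles from your database search into a list by hand. The function names and the 0.9 threshold are illustrative assumptions, not part of any tool mentioned in this lecture.

```python
import re
from difflib import SequenceMatcher
from typing import List, Optional, Tuple


def normalize_title(title: str) -> str:
    """Lowercase, replace punctuation with spaces, and collapse whitespace."""
    cleaned = re.sub(r"[^a-z0-9 ]+", " ", title.lower())
    return " ".join(cleaned.split())


def best_title_match(cited_title: str, search_results: List[str]) -> Tuple[Optional[str], float]:
    """Return the closest search result and its similarity score (0.0 to 1.0)."""
    target = normalize_title(cited_title)
    best, best_score = None, 0.0
    for candidate in search_results:
        score = SequenceMatcher(None, target, normalize_title(candidate)).ratio()
        if score > best_score:
            best, best_score = candidate, score
    return best, best_score


def looks_verified(cited_title: str, search_results: List[str], threshold: float = 0.9) -> bool:
    """True if some search result is a near-exact match for the cited title.

    The threshold is an assumed starting point; tune it for your own sources.
    """
    _, score = best_title_match(cited_title, search_results)
    return score >= threshold
```

A near-exact match only tells you that a paper with that title exists. You still need to open it and confirm that the authors, the year, and the claim match what the AI told you.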

Step 2: Cross-Tool Validation

Never rely on just one AI. If ChatGPT gives you a statistic, go to Perplexity AI or Consensus and ask: “Verify this specific data point: [Paste Data]. Provide the direct URL to the source.” Search-grounded research tools are generally better at pointing to live web sources, but you should still open the URL they return and confirm that it supports the claim.

Step 3: The Reverse Search Technique

Ask the AI to disprove itself. Try this prompt: “Act as a critical auditor. Find any potential inaccuracies or conflicting viewpoints regarding the statement I just wrote.” This pushes the AI to surface alternative data it might have ignored.

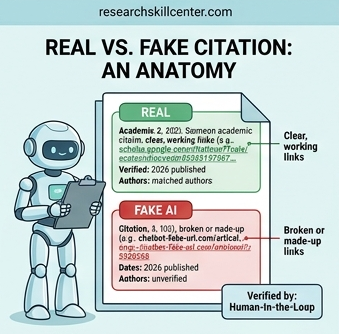

2. How to Spot “Fake” Citations and Dead Links

AI is famous for creating URLs that look real but lead to a 404 Not Found page. Here is how to audit your links:

The Domain Check: Look at the link provided. Is it from a reputable source like .edu, .gov, or a well-known journal like nature.com? If the domain looks strange or doesn’t match the topic, be careful.

The Author Audit: Search for the author’s name on LinkedIn or a University faculty page. Does this person actually exist? Are they an expert in this specific field?

The Date Trap: AI often mixes up years. It might claim a breakthrough happened in 2026 that actually happened in 2018. Always verify the Publication Date manually.
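The Domain Check and the Date Trap can be turned into a quick first-pass filter. The sketch below is a minimal Python example under stated assumptions: the allowlist (`TRUSTED_SUFFIXES`, `TRUSTED_HOSTS`) and the function name `audit_link` are hypothetical and only mirror the examples given above. It does not visit the link, so it cannot detect a 404 page.

```python
from typing import List
from urllib.parse import urlparse

# Assumption: a small illustrative allowlist; extend it for your own field.
TRUSTED_SUFFIXES = (".edu", ".gov")
TRUSTED_HOSTS = {"nature.com"}


def audit_link(url: str, claimed_year: int, current_year: int) -> List[str]:
    """Return a list of warnings for a cited URL and its claimed publication year."""
    warnings = []

    # Domain Check: extract the host and compare it to the allowlist.
    host = (urlparse(url).hostname or "").lower()
    if host.startswith("www."):
        host = host[4:]
    if not host:
        warnings.append("URL has no recognizable domain")
    else:
        trusted = host.endswith(TRUSTED_SUFFIXES) or any(
            host == known or host.endswith("." + known) for known in TRUSTED_HOSTS
        )
        if not trusted:
            warnings.append(f"Unfamiliar domain: {host}")

    # Date Trap: a publication year in the future cannot be right.
    if claimed_year > current_year:
        warnings.append(f"Claimed year {claimed_year} is in the future")

    return warnings
```

An empty list means only that nothing looked suspicious at first glance. A link on a trusted domain can still be invented, so open every URL yourself and run the Author Audit by hand.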

3. Human-in-the-Loop: The Key to Academic Integrity

At the Research Skill Center, we believe in the Human-in-the-Loop (HITL) model. This means AI does the heavy lifting of gathering info, but YOU do the thinking, verifying, and final writing.

Why this matters for SEO and AdSense:

Google’s algorithms are getting smarter at detecting purely AI-generated “fluff.” By fact-checking and then rewriting the information in your own unique voice (as we learned in Lecture 02), you create Helpful Content. This is the kind of content Google’s ranking systems are designed to reward.

Points for Integrity:

Verify every number: Statistics are the most common hallucinations.

Identify Bias: AI is trained on internet data, which can be biased. Always look for a neutral perspective.

Originality: Use AI to find the ingredients, but you must cook the meal yourself.
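To act on “Verify every number,” it helps to list every sentence in a draft that contains a figure. The following is a minimal Python sketch, assuming plain English text with ordinary sentence punctuation. The name `sentences_with_numbers` and the regular expression are illustrative choices, not a standard tool.

```python
import re
from typing import List, Tuple

# Matches integers, thousands separators, decimals, and an optional percent sign.
NUMBER_PATTERN = re.compile(r"\d+(?:,\d{3})*(?:\.\d+)?%?")


def sentences_with_numbers(text: str) -> List[Tuple[str, List[str]]]:
    """Return (sentence, numbers) pairs for every sentence that contains a number."""
    # Split after ., !, or ? when followed by whitespace, so decimals stay intact.
    sentences = re.split(r"(?<=[.!?])\s+", text.strip())
    checklist = []
    for sentence in sentences:
        numbers = NUMBER_PATTERN.findall(sentence)
        if numbers:
            checklist.append((sentence, numbers))
    return checklist
```

Each pair in the returned list is one item to run through the Triple-Check Method before you publish. The sketch only finds the numbers; deciding whether they are true remains your job.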

Summary and Practical Assignment

Integrity is the most valuable asset you have as a researcher. Tools will change, and AI will get faster, but the ability to separate truth from fiction will always be in high demand. By mastering these fact-checking steps, you aren’t just a “user” of AI; you are a Professional Analyst.

Your Assignment:

Ask an AI to give you “The top 3 scientific benefits of drinking blue tea with citations.”

Follow our Triple-Check Method to see if those citations are real.

Frequently Asked Questions

1. What exactly is an AI Hallucination?

- An AI hallucination occurs when a model generates information that sounds logically correct and fluent but is factually false. This happens because AI predicts the next “most likely” word rather than looking up a database of real-world facts.

2. Can I trust the links and citations provided by ChatGPT or Claude?

- No. AI models often hallucinate URLs or titles of research papers by mixing real authors with fake titles. You should always manually verify every link in a primary database like Google Scholar or ResearchGate.

3. What is the Triple-Check Method for verification?

- It is a 3-step audit process:

  - Direct Source Authentication: Search for the specific claim or citation in a trusted database.

  - Cross-Tool Validation: Ask a second, search-grounded AI (like Perplexity) to verify the first AI’s claim.

  - Reverse Search: Ask the AI to find “conflicting evidence” to its own statement to uncover hidden biases.

4. How do professors and editors detect AI-generated work in 2026?

- They use a combination of AI Detectors (like GPTZero or Originality.ai) and Process Checks. They look for “too smooth” transitions, a lack of personal voice, and, most importantly, citations that don’t exist in the real world.

5. Is it a breach of integrity to use AI for research at all?

- Using AI for brainstorming, outlining, and data synthesis is generally accepted. However, presenting AI-generated text as your own original thought without verification or disclosure is considered a breach of academic and professional integrity.

If you find a fake link or a fake study, share it in our community group so others can learn what a hallucination looks like!

Next Lecture: Lecture 05: The Final Project – Creating Your Own Automated Research Workflow.

Previous Lecture: Lecture 03: Autonomous AI Agents.
